We’ve been working to make Flickr faster for our users around the world. Since the primary photo storage locations are in the US, and information on the internet travels at a finite speed, the farther away a Flickr user is located from the US, the slower Flickr’s response time will be. Recently, we looked at opportunities to improve this situation. One of the improvements involves keeping temporary copies of recently viewed photos in locations nearer to users. The other improvement aims to get a benefit from these caches even when a user views a photo that is not already in the cache.

Regional Photo Caches

For a few years, we’ve deployed regional photo caches located in Switzerland and Singapore. Here’s how this works. When one of our users in Vietnam requests a photo, we copy it temporarily to Singapore. When a second user requests the same photo, from, say, Kuala Lumpur, the photo is already present in Singapore. Flickr can respond much faster using this copy (only a few hundred kilometers away) instead of using the original file back in the US (over 8,000 km away).

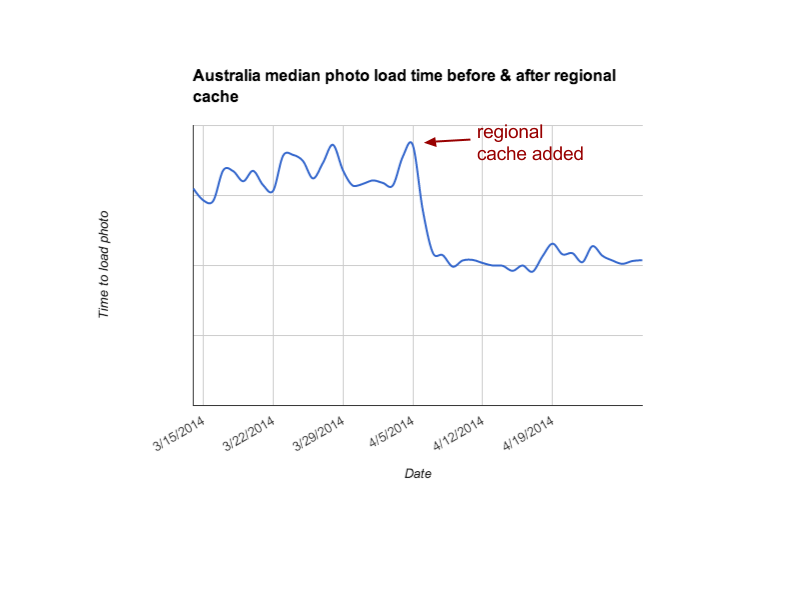

The first piece of our solution has been to create additional caches closer to our users. We expanded our regional cache footprint around two months ago. Our Australian users, among others, should now see dramatically faster load times. Australian users will now see the average image load about twice as fast as it did in March.

We’re happy with this improvement and we’re planning to add more regional caches over the next several months to help users in other regions.

Cache Prefetch

When users in locations far from the US view photos that are already in the cache, the speedup can be up to 10x, but only for the second and subsequent viewers. The first viewer still has to wait for the file to travel all the way from the US. This is important because there are so many photos on Flickr that are viewed infrequently. It’s likely that a given photo will not be present in the cache. One example is a user looking at their Auto Upload album. Auto uploaded photos are all private initially. Scrolling through this album, it’s likely that very few of the photos will be in their regional cache, since no other users would have been able to see them yet.

It turns out that we can even help the first viewer of a photo using a trick called cache warming.

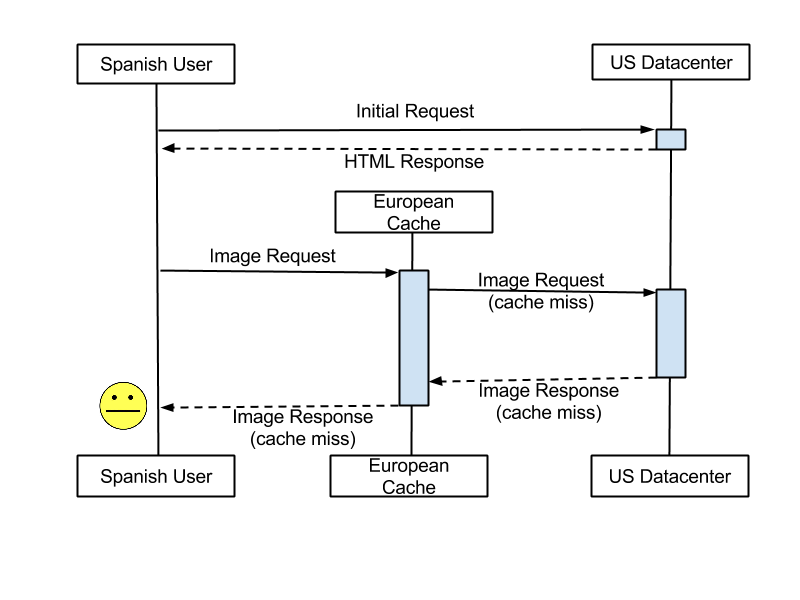

To understand how caching warming works, you need to understand a bit about how we serve images. For example, say that I’m a user in Spain trying to access the photostream of a user, Martin Brock, in the US. When my request for Martin Brock’s Photostream at https://www.flickr.com/photos/martinbrock/ hits our backend servers, our code quickly determines the most recent photos Martin has uploaded that are visible to me, which sizes will fit best in my browser, and the URLs of those images. It then sends me the list of those URLs in an HTML response. The user’s web browser reads the HTML, finds the image URLs and starts loading them from the closest regional cache.

Standard image fetch

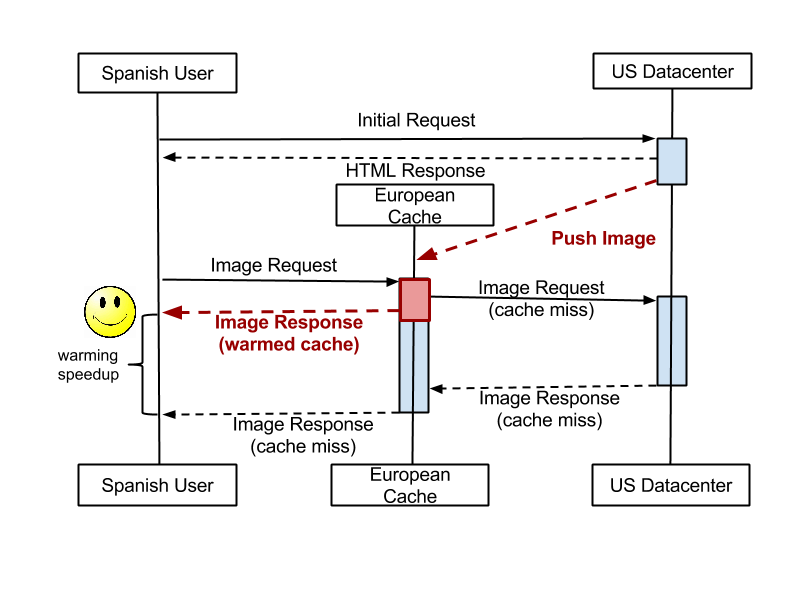

So you’re probably already guessing how to speed things up. The trick is to take advantage of the time in between when the server knows which images will be needed and the time when the browser starts loading them from the closest cache. This period of time can be in the range of hundreds of milliseconds. We saw an opportunity during this time to send the needed images over to the viewer’s regional cache in advance of their browser requesting the images. If we can “win the race” to do this, the viewer’s experience will be much faster, since images will load from the local cache instead of loading from the US.

To take advantage of this opportunity, we created a new “cache warming” process called The Warmer. Once we’ve determined which images will be requested (the first few photos in Martin’s photostream) we send a message from the API servers to The Warmer.

The Warmer listens for messages and, based on the user’s location, it determines from which of the Flickr regional caches the user will likely request the image. It then pushes the image out to this cache.

Optimized image fetch, with cache warming path indicated in red

Getting this to work well required a few optimizations.

Persistent connections

Yahoo encrypts all traffic between our data centers. This is great for security, but the time to set up a secure connection can be considerable. In our first iteration of The Warmer, this set up time was so long that we rarely got the photo to the cache in time to benefit a user. To eliminate this cost, we used an Nginx proxy which maintains persistent connections to our remote data centers. When we need to push an image out – a secure connection is already set up and waiting to be used.

Transport layer

The next optimization we made helped us reduce the cost of sending messages to The Warmer. Since the data we’re sending always fits in one datagram, and we also don’t care too much if a small percentage of these messages are never received, we don’t need any of the socket and connection features of TCP. So instead of using HTTP, we created a simple JSON format for sending messages using UDP datagrams. Another reason we chose to use UDP is that if The Warmer is not available or is reacting slowly, we don’t want that to cause slowdowns in the API.

Queue management

Naturally, some images are quite popular and it would waste resources to push them to the same cache repeatedly. So, the third optimization we applied was to maintain a list of recently pushed images in The Warmer. This simple “de-deduplication” cut the number of requests made by The Warmer by 60%. Similarly, The Warmer drops any incoming requests that are more than fifty milliseconds old. This “time-to-live” provides a safety valve in case The Warmer has fallen behind and can’t catch up.

def warm_up_url(params):

requested_jpg = params['jpg']

colo_to_warm = params['colo_to_warm']

curl = "curl -H 'Host: " + colo_to_warm + "' '" + keepalive_proxy + "/" + requested_jpg + "'"

os.system(curl)

if __name__ == '__main__':

# create the worker pool

from multiprocessing.pool import ThreadPool

worker_pool = ThreadPool(processes=100)

while True:

# receive requests

json_data, addr = sock.recvfrom(2048)

params = json.loads(json_data)

requested_jpg = warm_params['jpg']

colo_to_warm =

determine_colo_to_warm(params['http_endpoint'])

if recently_warmed(colo_to_warm, requested_jpg) :

continue

if request_too_old(params) :

continue

# warm up urls

params['colo_to_warm'] = colo_to_warm

warm_result = worker_pool.apply_async(warm_up_url,(params,))

Cache Warmer pseudocode

Java

Our initial implementation of the Warmer was in Python, using a ThreadPool. This allowed very rapid prototyping and worked great — up to a point. Profiling the Python code, we found a large portion of time spent in socket calls. Since there is so little code in The Warmer, we tried porting to Java. A nearly line-for-line translation resulted in a greater than 10x increase in capacity.

Results

When we began this process, we weren’t sure whether The Warmer would be able to populate caches before the user requests came in. We were pleasantly surprised when we first enabled it at scale. In the first region where we’ve deployed The Warmer (Western Europe), we observed a reduced median latency of more than 200 ms, 95% of photos requests sped up by at least 100 ms, and for a small percentage of photos we see over 400 ms reduction in latency. As we continue to deploy The Warmer in additional regions, we expect to see similar improvements.

Next Steps

In addition to deploying more regional photo caches and continuing to improve prefetching performance, we’re looking at a few more techniques to make photos load faster.

Compression

Overall Flickr uses a light touch on compression. This results in excellent image quality at the cost of relatively large file sizes. This translates directly into longer load times for users. With a growing number of our users connecting to Flickr with wireless devices, we want to make sure we can give users a good experience regardless of whether they have a high-speed LTE connection or two-bars of 3G in the countryside. An important goal will be to make these changes with little or no loss in image quality.

We are also testing alternative image encoding formats (like WebP). Under certain conditions WebP compression may offer better image quality at the same compression ratio than JPEG can achieve.

Geolocation and routing

It turns out it’s not straightforward to know which photo cache is going to give the best performance for a user. It depends on a lot of factors, many of which change over time — sometimes suddenly. We think the best way to do this is with a system that adapts dynamically to “Internet weather.”

Cache intelligence

Today, if a user needs to see a medium sized version of an image, and that version is not already present in the cache, the user will need to wait to retrieve the image from the US, even if a larger version of the image is already in the cache. In this case, there is an opportunity to create the smaller version at the cache layer and avoid the round-trip to the US.

Overall we’re happy with these improvements and we’re excited about the additional opportunities we have to continue to make the Flickr experience super fast for our users. Thanks for following along.