Yesterday we released a new people selector widget (which we’ve been calling Bo Selecta internally). This widget downloads a list of all of your contacts, in JavaScript, in under 200ms (this is true even for members with 10,000+ contacts). In order to get this level of performance, we had to completely rethink how we send data from the server to the client.

Server Side: Cache Everything

To make this data available quickly from the server, we maintain and update a per-member cache in our database, where we store each member’s contact list in a text blob — this way it’s a single quick DB query to retrieve it. We can format this blob in any way we want: XML, JSON, etc. Whenever a member updates their information, we update the cache for all of their contacts. Since a single member who changes their contact information can require updating the contacts cache for hundreds or even thousands of other members, we rely upon prioritized tasks in our offline queue system.
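A minimal sketch of the cache-and-fan-out idea, using in-memory stand-ins for the database and the offline queue. Every name here (`contactCache`, `listedBy`, `onMemberUpdated`, `runNextTask`) is hypothetical and only illustrates the shape of the approach, not the production system.

```javascript
// Hypothetical in-memory stand-ins for the database cache and the offline queue.
const contactCache = new Map(); // memberId -> serialized contact list (text blob)
const taskQueue = [];           // pending rebuild tasks: { memberId, priority }

// Hypothetical reverse index: for each member, the members who list them as a contact.
const listedBy = new Map([
  ["alice", ["bob", "carol"]],
  ["bob", ["alice"]],
]);

// Reading is a single lookup, mirroring the single-query retrieval described above.
function getContactBlob(memberId) {
  return contactCache.get(memberId) || "";
}

// When a member changes their details, queue a rebuild for everyone who lists them.
function onMemberUpdated(memberId, priority) {
  for (const ownerId of listedBy.get(memberId) || []) {
    taskQueue.push({ memberId: ownerId, priority: priority });
  }
  // In this sketch a lower number means a more urgent task.
  taskQueue.sort((a, b) => a.priority - b.priority);
}

// A worker takes the most urgent task and rewrites one cached blob.
function runNextTask(buildBlob) {
  const task = taskQueue.shift();
  if (!task) return false;
  contactCache.set(task.memberId, buildBlob(task.memberId));
  return true;
}
```

The point of the split is that the expensive part (rebuilding many blobs) happens off the request path, while reads stay a single lookup.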

Testing the Performance of Different Data Formats

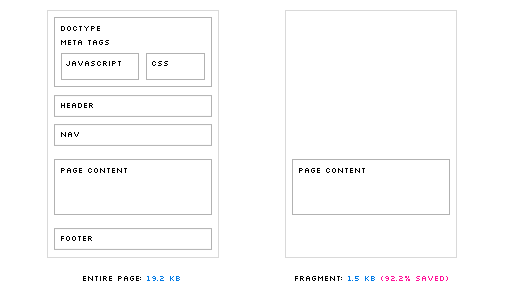

Despite the fact that our backend system can deliver the contact list data very quickly, we still don’t want to unnecessarily fetch it for each page load. This means that we need to defer loading until it’s needed, and that we have to be able to request, download, and parse the contact list in the amount of time it takes a member to go from hovering over a text field to typing a name.
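Deferred loading of this kind can be sketched as a small wrapper that requests the list at most once, the first time something asks for it. The names `createLazyLoader` and `fetchContacts`, and the URL and element id in the usage comment, are assumptions made for illustration.

```javascript
// Wraps a fetch function so the contact list is requested at most once,
// and only when something first asks for it (for example, on hover or focus).
function createLazyLoader(fetchContacts) {
  let pending = null; // the in-flight or completed request, once started
  return function load() {
    if (pending === null) {
      pending = fetchContacts();
    }
    return pending;
  };
}

// Usage in a browser might look like this (URL and element id are hypothetical):
// const load = createLazyLoader(() => fetch("/contacts").then((r) => r.text()));
// document.getElementById("to-field").addEventListener("mouseover", load);
```

Starting the request on hover rather than on the first keystroke is what buys the extra time between the member reaching for the field and actually typing.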

With this goal in mind, we started testing various data formats, and recording the average amount of time it took to download and parse each one. We started with Ajax and XML; this proved to be the slowest by far, so much so that the larger test cases wouldn’t even run to completion (the tags used to create the XML structure also added a lot of weight to the filesize). It appeared that using XML was out of the question.

BoSelectaJsonGoodFunTimes: eval() is Slow

Next we tried using Ajax to fetch the list in the JSON format (and having eval() parse it). This was a major improvement, both in terms of filesize across the wire and parse time.

While all of our tests ran to completion (even the 10,000 contacts case), parse time per contact was not constant: it climbed steadily as the number of contacts grew (from 0.67ms per contact at 165 contacts to 7.66ms at 10,617), to the point where the 10,000 contact case took over 80 seconds to parse, 400 times slower than our goal of 200ms. It seemed that JavaScript had a problem manipulating and eval()ing very large strings, so this approach wasn’t going to work either.

| Contacts | File Size (KB) | Parse Time (ms) | File Size per Contact (KB) | Parse Time per Contact (ms) |
|---|---|---|---|---|
| 10,617 | 1536 | 81312 | 0.14 | 7.66 |
| 4,878 | 681 | 18842 | 0.14 | 3.86 |
| 2,979 | 393 | 6987 | 0.13 | 2.35 |
| 1,914 | 263 | 3381 | 0.14 | 1.77 |
| 1,363 | 177 | 1837 | 0.13 | 1.35 |
| 798 | 109 | 852 | 0.14 | 1.07 |
| 644 | 86 | 611 | 0.13 | 0.95 |
| 325 | 44 | 252 | 0.14 | 0.78 |
| 260 | 36 | 205 | 0.14 | 0.79 |
| 165 | 24 | 111 | 0.15 | 0.67 |
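A measurement like the one in this table can be approximated with a small timing harness. The sketch below generates made-up contacts and times an eval() parse; the helper names and the contact fields are assumptions, and a modern JavaScript engine should not be expected to reproduce the numbers above.

```javascript
// Builds a JSON string describing `count` made-up contacts.
function makeContactsJson(count) {
  const contacts = [];
  for (let i = 0; i < count; i++) {
    contacts.push({ n: "Member " + i, u: "member" + i });
  }
  return JSON.stringify(contacts);
}

// Parses the text with eval() and reports the elapsed wall-clock time.
function timeEvalParse(jsonText) {
  const start = Date.now();
  // Wrapping in parentheses makes eval() treat the text as an expression,
  // which matters when the top-level value is an object literal.
  const contacts = eval("(" + jsonText + ")");
  const elapsedMs = Date.now() - start;
  return {
    count: contacts.length,
    elapsedMs: elapsedMs,
    msPerContact: contacts.length ? elapsedMs / contacts.length : 0,
  };
}
```

Running `timeEvalParse(makeContactsJson(n))` for several values of `n` gives the per-contact figure for each list size. Since eval() executes whatever it is given, this should only ever be pointed at trusted data.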

JSON and Dynamic Script Tags: Fast but Insecure

Working with the theory that large string manipulation was the problem with the last approach, we switched from Ajax to fetching the data with a dynamically generated script tag. This means that the contact data was never treated as a string, and was instead executed as soon as it was downloaded, just like any other JavaScript file. The difference in performance was shocking: 89ms to parse 10,000 contacts (a reduction of roughly three orders of magnitude), while the smallest case of 172 contacts only took 6ms. The parse time per contact actually decreased the larger the list became. This approach looked perfect, except for one thing: in order for this JSON to be executed, we had to wrap it in a callback method. Since it’s executable code, any website in the world could use the same approach to download a Flickr member’s contact list. This was a deal breaker.

| Contacts | File Size (KB) | Parse Time (ms) | File Size per Contact (KB) | Parse Time per Contact (ms) |
|---|---|---|---|---|
| 10,709 | 1105 | 89 | 0.10 | 0.01 |
| 4,877 | 508 | 41 | 0.10 | 0.01 |
| 2,979 | 308 | 26 | 0.10 | 0.01 |
| 1,915 | 197 | 19 | 0.10 | 0.01 |
| 1,363 | 140 | 15 | 0.10 | 0.01 |
| 800 | 83 | 11 | 0.10 | 0.01 |
| 644 | 67 | 9 | 0.10 | 0.01 |
| 325 | 35 | 8 | 0.11 | 0.02 |
| 260 | 27 | 7 | 0.10 | 0.03 |
| 172 | 18 | 6 | 0.10 | 0.03 |
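For readers unfamiliar with the technique, the script tag approach with a callback wrapper looks roughly like the sketch below. The function name, the `callback` query parameter, and the idea of passing in `doc` and `globalObj` (so the code can run outside a browser) are all assumptions for illustration.

```javascript
// Requests `url` by appending a <script> element to the page.
// `doc` is the page's document object and `globalObj` is where the callback
// is exposed (window in a browser). Both are parameters so the sketch can be
// exercised outside a browser.
function loadViaScriptTag(doc, globalObj, url, callbackName, onData) {
  // The response is expected to be JavaScript such as:
  //   callbackName([ ...contacts... ]);
  globalObj[callbackName] = function (data) {
    delete globalObj[callbackName]; // one-shot callback
    onData(data);
  };
  const script = doc.createElement("script");
  script.src = url + "?callback=" + encodeURIComponent(callbackName);
  doc.getElementsByTagName("head")[0].appendChild(script);
  return script;
}
```

Because a script tag can point at any domain and the browser simply executes what comes back, a page on another site could define its own callback and request the same URL. That is the exposure described above.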

Going Custom

Having set the performance bar pretty high with the last approach, we dove into custom data formats. The challenge would be to create a format that we could parse ourselves, using JavaScript’s String and RegExp methods, that would also match the speed of JSON executed natively. This would allow us to use Ajax again, but keep the data restricted to our domain.

Since we had already discovered that some methods of string manipulation didn’t perform well on large strings, we restricted ourselves to a method that we knew to be fast: split(). We used control characters to delimit each contact, and a different control character to delimit the fields within each contact. This allowed us to parse the string into contact objects with one split, then loop through that array and split again on each string.

    // Split the response into one string per contact. ("\c" and "\a" stand in
    // here for the two control characters used as delimiters.)
    that.contacts = o.responseText.split("\c");

    for (var n = 0, len = that.contacts.length, contactSplit; n < len; n++) {
        // Split this contact's string into its individual fields.
        contactSplit = that.contacts[n].split("\a");

        // Replace the raw string with an object keyed by short field names.
        that.contacts[n] = {};
        that.contacts[n].n = contactSplit[0];
        that.contacts[n].e = contactSplit[1];
        that.contacts[n].u = contactSplit[2];
        that.contacts[n].r = contactSplit[3];
        that.contacts[n].s = contactSplit[4];
        that.contacts[n].f = contactSplit[5];
        that.contacts[n].a = contactSplit[6];
        that.contacts[n].d = contactSplit[7];
        that.contacts[n].y = contactSplit[8];
    }
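To make the format concrete, here is a self-contained round trip: a serializer of the kind the server would need, plus a matching split()-based parser. The specific delimiters (`\u0001` and `\u0002`) and the three-field layout are assumptions, since the post does not say which control characters or field order were used.

```javascript
// Assumed delimiters: the actual control characters are not given in the post.
const CONTACT_SEP = "\u0001"; // between contacts
const FIELD_SEP = "\u0002";   // between fields within a contact

// Hypothetical field order shared by the serializer and the parser.
const FIELDS = ["n", "e", "u"];

// Joins each contact's fields, then joins the contacts into one string.
function serializeContacts(contacts) {
  return contacts
    .map((c) => FIELDS.map((f) => c[f]).join(FIELD_SEP))
    .join(CONTACT_SEP);
}

// One split for contacts, then one split per contact for its fields.
function parseContacts(text) {
  if (text === "") return []; // "".split(sep) would yield one empty record
  return text.split(CONTACT_SEP).map((record) => {
    const values = record.split(FIELD_SEP);
    const contact = {};
    FIELDS.forEach((f, i) => { contact[f] = values[i]; });
    return contact;
  });
}
```

The format has no escaping, so it only works if field values can never contain either delimiter. Control characters are a reasonable choice for that reason, since they should not appear in names or email addresses.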

Though this technique sounds like it would be slow, it actually performed on par with native JSON parsing (it was a little faster for cases containing fewer than 1,000 contacts, and a little slower for those over 1,000). It also had the smallest filesize: about 80% the size of the JSON data for the same number of contacts. This is the format that we ended up using.

| Contacts | File Size (KB) | Parse Time (ms) | File Size per Contact (KB) | Parse Time per Contact (ms) |
|---|---|---|---|---|
| 10,741 | 818 | 173 | 0.08 | 0.02 |
| 4,877 | 375 | 50 | 0.08 | 0.01 |
| 2,979 | 208 | 34 | 0.07 | 0.01 |
| 1,916 | 144 | 21 | 0.08 | 0.01 |
| 1,363 | 93 | 16 | 0.07 | 0.01 |
| 800 | 58 | 10 | 0.07 | 0.01 |
| 644 | 46 | 8 | 0.07 | 0.01 |
| 325 | 24 | 4 | 0.07 | 0.01 |
| 260 | 14 | 3 | 0.05 | 0.01 |
| 160 | 13 | 3 | 0.08 | 0.02 |

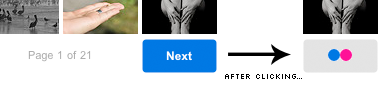

Searching

Now that we had a giant array of contacts in JavaScript, we needed a way to search through them and select one. For this, we used YUI’s excellent AutoComplete widget. To get the data into the widget, we created a DataSource object that would execute a function to get results. This function simply looped through our contact array and matched the given query against four different properties of each contact, using a regular expression (RegExp objects turned out to be extremely well-suited for this, with the average search time for the 10,000 contacts case coming in under 38ms). After the results were collected, the AutoComplete widget took care of everything else, including caching the results.
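A search function of this shape might look like the sketch below. Which four properties were searched, and whether the match was anchored to the start of a word, is not stated in the post; the field names in `SEARCH_FIELDS` and the case-insensitive "contains" match are assumptions.

```javascript
// Escapes characters that have special meaning in a regular expression,
// so that a query such as "a.b" is matched literally.
function escapeRegExp(text) {
  return text.replace(/[.*+?^${}()|[\]\\]/g, "\\$&");
}

// Hypothetical choice of the four properties to search.
const SEARCH_FIELDS = ["n", "e", "u", "r"];

// Returns every contact with at least one searched field containing the query.
function searchContacts(contacts, query) {
  if (query === "") return [];
  // No "g" flag: a global RegExp keeps state between test() calls.
  const pattern = new RegExp(escapeRegExp(query), "i");
  const results = [];
  for (let i = 0; i < contacts.length; i++) {
    const contact = contacts[i];
    for (let j = 0; j < SEARCH_FIELDS.length; j++) {
      const value = contact[SEARCH_FIELDS[j]];
      if (typeof value === "string" && pattern.test(value)) {
        results.push(contact);
        break; // one matching field is enough
      }
    }
  }
  return results;
}
```

Building the RegExp once per query, rather than once per contact, keeps the inner loop down to a single `test()` call per field.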

There was one optimization we made to our AutoComplete configuration that was particularly effective. Regardless of how much we optimized our search method, we could never get results to return in less than 200ms (even for trivially small numbers of contacts). After a lot of profiling and hair pulling, we found the queryDelay setting. This is set to 200ms by default, and artificially delays every search in order to reduce UI flicker for quick typists. After setting that to 0, we found our search times improved dramatically.
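The effect of a query delay can be illustrated with a small stand-alone sketch. This is not YUI’s implementation; `createDelayedQuery` and its injectable `schedule` and `cancel` parameters are hypothetical, and exist only to show why a 200ms delay puts a floor under every search, however fast the search itself is.

```javascript
// Returns a keystroke handler that waits `delayMs` after the latest keystroke
// before running `search`. `schedule` and `cancel` default to the standard
// timer functions and can be swapped out for testing.
function createDelayedQuery(search, delayMs, schedule = setTimeout, cancel = clearTimeout) {
  let timer = null;
  return function onKeystroke(query) {
    if (delayMs <= 0) {
      search(query); // no artificial wait
      return;
    }
    if (timer !== null) cancel(timer); // a newer keystroke supersedes the old one
    timer = schedule(function () {
      timer = null;
      search(query);
    }, delayMs);
  };
}
```

With a positive delay, fast typists trigger fewer searches, at the cost of every result arriving at least `delayMs` late. With a search that already completes in tens of milliseconds, that trade is not worth making.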

The End Result

Head over to your Contact List page and give it a whirl. We are also using the Bo Selecta with FlickrMail and the Share This widget on each photo page.